I’ll help you master the complex world of memory settings so your computer runs at peak efficiency.

Small changes in timing values can make a real difference in how the module talks to the processor and how fast your system responds under load.

I will explain how many clock cycles a typical operation needs. For example, memory sold as "3200MHz" actually performs 3,200,000,000 transfers per second (3200 MT/s) on a 1600 MHz clock, because DDR moves data twice per clock cycle. That figure helps set expectations for raw throughput.

My goal is to demystify row and column operations, show how DDR5 standards use these settings, and explain why CAS latency matters for gaming or heavy productivity.

By the end of this article, you’ll know how to choose upgrades that balance speed, stability, and real-world performance.

Key Takeaways

- I’ll guide you through core concepts that affect memory and system performance.

- Clock cycles determine how quickly data moves between module and CPU.

- Understanding row operations helps diagnose stability and speed issues.

- DDR5 uses timing controls to push performance while keeping systems stable.

- CAS latency is one key metric to consider when upgrading for gaming or work.

Understanding RAM Timings and CAS Latency Basics

To begin, I describe the tiny time slice that sets the pace for every memory operation.

Defining clock cycles

I define clock cycles as the basic unit of time that dictates how fast a memory module can answer requests.

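For example, memory sold as "3200MHz" runs at 3200 MT/s (million transfers per second). Because DDR transfers data on both edges of the clock, the actual clock is 1600 MHz, or 1,600,000,000 cycles per second. Timings are counted in those clock cycles.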
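
The arithmetic behind that can be sketched in a few lines. This is a minimal illustration, assuming the usual DDR convention that the advertised number is the data rate in MT/s and that the clock runs at half of it; the function names are my own.

```python
def clock_mhz(data_rate_mts: float) -> float:
    """DDR moves two transfers per clock, so the clock is half the data rate."""
    return data_rate_mts / 2


def cycle_time_ns(data_rate_mts: float) -> float:
    """Length of one clock cycle in nanoseconds (1000 / MHz)."""
    return 1000 / clock_mhz(data_rate_mts)


# Illustrative data rates only; any DDR data rate works the same way.
for rate in (3200, 4800, 6000):
    print(f"{rate} MT/s: {clock_mhz(rate):.0f} MHz clock, "
          f"{cycle_time_ns(rate):.4f} ns per cycle")
```

For a 3200 MT/s kit this gives a 1600 MHz clock and 0.625 ns per cycle, which is the unit every timing value is counted in.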

The Relationship Between Frequency and Latency

The link between frequency and latency is not always simple. As frequency rises, the cycle counts in the timings usually rise too, so the real delay in nanoseconds can stay roughly the same.

CAS latency is the number of clock cycles it takes RAM to output data after a read command.

So at the same rated speed, a lower count means a shorter real delay and often a snappier feel.

| Spec | Value | Practical note |
|---|---|---|
| Data rate | 3200 MT/s (sold as "3200MHz") | 3,200,000,000 transfers/sec |
| Clock frequency | 1600 MHz | 1,600,000,000 cycles/sec |
| Cycle time | 0.625 ns | Used to compute real time for an operation |
| CAS latency | Example: 16 cycles | 16 × 0.625 ns = 10 ns before data is returned |

- Frequency sells speed.

- Timings define real responsiveness.

- I focus on cycles so you can tune for real gains.
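
To see why frequency alone does not tell the whole story, here is a small sketch that converts CAS latency from cycles to nanoseconds. It assumes the common rule of thumb latency = CL × 2000 ÷ data rate (MT/s); the three kits are illustrative examples, not test results.

```python
def cas_latency_ns(data_rate_mts: float, cl: int) -> float:
    """CAS latency in nanoseconds: cycles times the length of one cycle."""
    return cl * 2000 / data_rate_mts


# (label, data rate in MT/s, CAS latency in cycles), all illustrative
kits = [
    ("DDR4-3200 CL16", 3200, 16),
    ("DDR5-6000 CL36", 6000, 36),
    ("DDR5-6400 CL32", 6400, 32),
]

for label, rate, cl in kits:
    print(f"{label}: {cas_latency_ns(rate, cl):.2f} ns")
```

The DDR4-3200 CL16 and DDR5-6400 CL32 entries both work out to 10 ns, even though one has twice the cycle count of the other.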

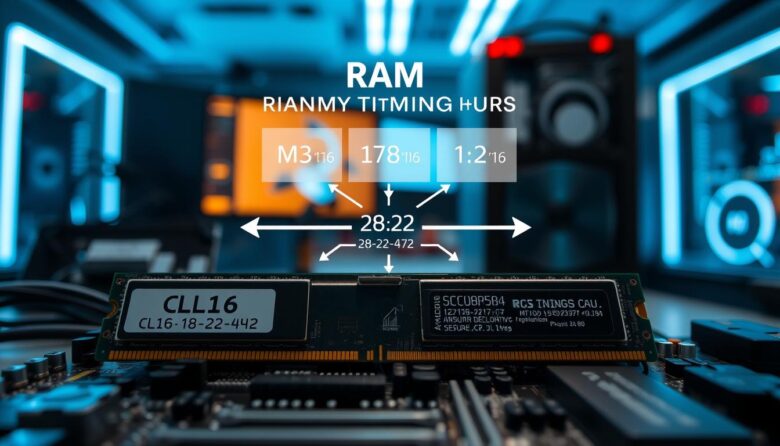

Decoding Primary Memory Timings

I break down the key numbers you see on a kit so they mean something useful for your build.

CAS Latency Explained

CAS latency is the exact number of clock cycles it takes RAM to respond after a read command, which is issued with the column address strobe (CAS). In plain terms, it is the delay from request to the first piece of data. Lower values often give a snappier feel.

Understanding Row Access and Precharge

The row-to-column delay, known as tRCD, is the time between activating a row and being able to read or write a column in it. The row precharge time (tRP) is how long the memory needs to close one row before opening another.

Command Rate and Stability

The command rate (1T or 2T) sets how many clock cycles the controller holds a command on the bus before the module acts on it. A 1T setting can boost performance but may reduce stability at high speeds. A page miss, where the wrong row is open, needs roughly the sum of tRP, tRCD, and CAS latency to fetch data.

“Understanding these numbers helps you pick a module that balances speed and reliability.”

- Example kit: 36-38-38-76 lists CAS latency, tRCD, tRP, and tRAS (row active time), in that order.

- Many clock cycles are consumed during access; reducing them helps performance.
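
The way those numbers add up can be sketched as follows. This is a simplified model, assuming the conventional CL-tRCD-tRP-tRAS order for the timing string and a 6000 MT/s data rate for the nanosecond conversion. The "page hit" and "page empty" labels are my own shorthand for "row already open" and "no row open", and real controllers add overhead this ignores.

```python
from typing import NamedTuple


class Timings(NamedTuple):
    cl: int    # CAS latency
    trcd: int  # row-to-column delay
    trp: int   # row precharge time
    tras: int  # row active time


def parse_timings(label: str) -> Timings:
    """Parse a kit label such as '36-38-38-76' (assumed CL-tRCD-tRP-tRAS)."""
    return Timings(*(int(part) for part in label.split("-")))


def access_cycles(t: Timings) -> dict[str, int]:
    """Approximate cycles to first data for three simplified cases."""
    return {
        "page hit": t.cl,                     # row already open
        "page empty": t.trcd + t.cl,          # no row open yet
        "page miss": t.trp + t.trcd + t.cl,   # wrong row open
    }


def cycles_to_ns(cycles: int, data_rate_mts: float) -> float:
    """Convert clock cycles to nanoseconds for a given DDR data rate."""
    return cycles * 2000 / data_rate_mts


timings = parse_timings("36-38-38-76")
for case, cycles in access_cycles(timings).items():
    print(f"{case}: {cycles} cycles, {cycles_to_ns(cycles, 6000):.1f} ns")
```

Under these assumptions a page miss costs 112 cycles, roughly three times a page hit, which is why tRCD and tRP matter alongside CAS latency.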

The Role of SPD and XMP Profiles in System Optimization

I show how onboard profile data tells your PC which safe memory settings to use during boot.

JEDEC defines baseline standards used across DDR4 and DDR5 hardware. Each stick contains a small SPD (Serial Presence Detect) EEPROM that stores those safe entries, which the firmware reads first.

XMP (Intel's Extreme Memory Profile) makes it easy to push past JEDEC defaults. Most users get a clear boost in performance by enabling a profile instead of tuning values manually.

- I recommend checking the SPD tab in CPU‑Z to view available profiles and timings.

- Profiles are designed to keep your system stable while running at the advertised speed and CAS latency.

- They do not list every possible timing, so manual tweaks can help for extreme tuning.

| Profile | Source | Purpose |
|---|---|---|
| JEDEC | JEDEC standard | Safe default speed and basic timings |
| SPD | Module EEPROM | Stores available profiles and module information |
| XMP | Manufacturer | Optimized performance profile beyond JEDEC |

“Enabling a validated XMP profile is the easiest way to gain higher real‑world speed without deep manual tuning.”
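
As a rough illustration of what switching profiles changes, the sketch below compares two hypothetical SPD entries for one DDR5 module. The values (a 4800 MT/s CL40 baseline and a 6000 MT/s CL36 XMP profile) are assumptions chosen for the example, not data read from a real stick, and the `Profile` class is my own.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Profile:
    name: str
    data_rate_mts: int  # advertised data rate in MT/s
    cl: int             # CAS latency in clock cycles

    @property
    def latency_ns(self) -> float:
        """CAS latency converted to nanoseconds."""
        return self.cl * 2000 / self.data_rate_mts


# Hypothetical profile values for illustration only.
profiles = [
    Profile("JEDEC baseline", 4800, 40),
    Profile("XMP", 6000, 36),
]

for p in profiles:
    print(f"{p.name}: {p.data_rate_mts} MT/s, CL{p.cl}, {p.latency_ns:.2f} ns")

fastest = min(profiles, key=lambda p: p.latency_ns)
print("Lowest CAS latency:", fastest.name)
```

With these example numbers the XMP profile raises the data rate and also cuts the real CAS delay from about 16.7 ns to 12 ns.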

Real World Impact of Memory Latency on Performance

Measuring how fast memory answers requests reveals real gains more clearly than specs alone. I test common workloads to show what small changes mean for frame rates, load times, and multitasking.

What I found in practice: a modern DDR5 kit like the Corsair Dominator Platinum RGB 32GB 6000MT/s with a CAS latency of 36 performs very well in games and apps.

How to Test Your Memory Latency

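I recommend two simple tools. Both check for memory errors rather than measure latency directly, so use them to confirm that your chosen timings are stable. For a quick health check, run Windows Memory Diagnostic by pressing Windows + R and typing mdsched.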

For deep validation, use MemTest86. It has been trusted for over 20 years and catches timing-related stability issues that cause crashes or data corruption.

Real-world impact is usually modest: differences in delay often change gaming performance by about 1%–5%. The time your processor takes to access data depends on both the frequency and the primary timings of the module.

“The number of clock cycles the module needs to respond is critical when you’re squeezing out every bit of performance.”

- Identical speeds can still vary due to sub‑timings and row access (tRCD, tRP).

- Lower CAS latency helps, but the CPU and GPU typically matter more for overall performance.

- Use diagnostics to confirm optimal speed without instability.

Final Thoughts on Memory Tuning

I recommend enabling a validated XMP profile for most users. It gives an easy uplift without hours of manual work. Stability should come first; chasing the absolute lowest CAS latency can risk data errors and crashes.

I still enjoy manual tuning for the challenge. If you dive deeper, focus on tRCD, tRP (precharge time), and tRAS (row active time) to understand how access happens. That knowledge helps when you push a module beyond stock settings.

Remember: real-world delay depends on both the number of clock cycles and the frequency they run at. Small gains are real but often subtle, so pick settings that deliver the best experience for your system and workload.

FAQ

What do I need to know about clock cycles when optimizing memory?

I look at clock cycles as the basic heartbeat that governs data transfers. A lower number of cycles for a specific operation usually means faster response, while higher frequencies can offset higher cycle counts. I balance cycle counts with operating speed to find the best real-world throughput for a given CPU and motherboard.

How does frequency relate to access delay and overall speed?

Higher frequency increases how often data can move per second, which often improves throughput. But each access also takes a certain number of cycles; if those cycle counts are high, the benefit from faster clocks shrinks. I compare effective nanoseconds (cycles ÷ clock frequency) to judge real latency, not just raw MHz numbers.

What exactly is CAS (column address strobe) latency and why does it matter?

CAS (Column Address Strobe) latency is the delay between a read command and when data starts leaving the module. A lower value shortens wait time for a single column access, improving responsiveness in workloads with many small random reads. I view it as one of several primary numbers that shape perceived system snappiness.

Can you explain row active, precharge, and their impact on performance?

Row active time is how long a row must stay open to complete operations; precharge is the time needed to close it before opening another. If software frequently jumps between rows, long precharge or row active times can slow things down. I tune systems so row operations match typical access patterns for the workload.

What is command rate and how does it affect stability?

Command rate sets the delay between chip select and actual command execution. A tighter setting can shave a clock cycle, well under a nanosecond, from some accesses but may push modules or the memory controller toward instability. I increase the command delay when I see boot or error issues, then test stability under load.

How do SPD and XMP profiles help with tuning?

SPD stores manufacturer-recommended parameters; XMP offers tuned profiles for higher speeds. I use XMP to quickly reach rated performance, then manually tweak numbers if I need better latency or extra stability tailored to my CPU and board.

How much does memory access delay affect real applications and games?

The impact varies. Latency-sensitive tasks like high-frequency trading, databases, and some game engines benefit noticeably from reduced access delays. Bulk throughput tasks like file transfers or video encoding rely more on raw bandwidth. I pick settings based on which side—latency or bandwidth—matters most for my use case.
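
To put rough numbers on the two sides, here is a sketch that computes theoretical peak bandwidth and CAS latency for two illustrative kits. It assumes a 64-bit (8-byte) data bus per module and ignores real-world overhead, so treat the results as upper bounds rather than measurements.

```python
def peak_bandwidth_gbs(data_rate_mts: float, bus_bytes: int = 8) -> float:
    """Theoretical peak in GB/s: transfers per second times bytes per transfer."""
    return data_rate_mts * bus_bytes / 1000


def cas_latency_ns(data_rate_mts: float, cl: int) -> float:
    """CAS latency in nanoseconds for a DDR data rate given in MT/s."""
    return cl * 2000 / data_rate_mts


# (label, data rate in MT/s, CAS latency in cycles), illustrative only
kits = [("DDR4-3200 CL16", 3200, 16), ("DDR5-6000 CL36", 6000, 36)]

for label, rate, cl in kits:
    print(f"{label}: {peak_bandwidth_gbs(rate):.1f} GB/s peak, "
          f"{cas_latency_ns(rate, cl):.1f} ns CAS latency")
```

In this example the faster kit offers far more peak bandwidth (48 GB/s against 25.6 GB/s) while its CAS delay is slightly longer (12 ns against 10 ns), which is the trade-off to weigh for a given workload.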

What tools do you recommend to test memory access times and stability?

I use a combination of synthetic benchmarks to measure raw access times and real workload tests for practical performance. Popular utilities include memory benchmark tools that report nanosecond timings and stress-test suites that reveal instability under sustained load. I always pair results with system monitoring for temperature and error logs.

Marcus is a Senior Hardware Analyst with over 15 years of experience in system architecture and PC building. Specializing in memory optimization and overclocking, he translates complex RAM specifications into practical, easy-to-understand guides. When he isn’t bench-testing the latest DDR5 kits for AllTopSoft, Marcus is likely tinkering with his custom liquid-cooled home server.